Exporting Segment Anything, MobileSAM, and Segment Anything 2 into ONNX format for easy deployment.

Supported models:

- Segment Anything 2 (Tiny, Small, Base, Large) - Note: Experimental. Only image input is supported for now.

- Segment Anything (SAM ViT-B, SAM ViT-L, SAM ViT-H)

- MobileSAM

Requirements:

- Python 3.10+

From PyPi:

pip install torch==2.4.0 torchvision --index-url https://download.pytorch.org/whl/cpu

pip install samexporterFrom source:

pip install torch==2.4.0 torchvision --index-url https://download.pytorch.org/whl/cpu

git clone https://github.com/vietanhdev/samexporter

cd samexporter

pip install -e .- Download Segment Anything from https://github.com/facebookresearch/segment-anything.

- Download MobileSAM from https://github.com/ChaoningZhang/MobileSAM.

original_models

+ sam_vit_b_01ec64.pth

+ sam_vit_h_4b8939.pth

+ sam_vit_l_0b3195.pth

+ mobile_sam.pt

...

- Convert encoder SAM-H to ONNX format:

python -m samexporter.export_encoder --checkpoint original_models/sam_vit_h_4b8939.pth \

--output output_models/sam_vit_h_4b8939.encoder.onnx \

--model-type vit_h \

--quantize-out output_models/sam_vit_h_4b8939.encoder.quant.onnx \

--use-preprocess- Convert decoder SAM-H to ONNX format:

python -m samexporter.export_decoder --checkpoint original_models/sam_vit_h_4b8939.pth \

--output output_models/sam_vit_h_4b8939.decoder.onnx \

--model-type vit_h \

--quantize-out output_models/sam_vit_h_4b8939.decoder.quant.onnx \

--return-single-maskRemove --return-single-mask if you want to return multiple masks.

- Inference using the exported ONNX model:

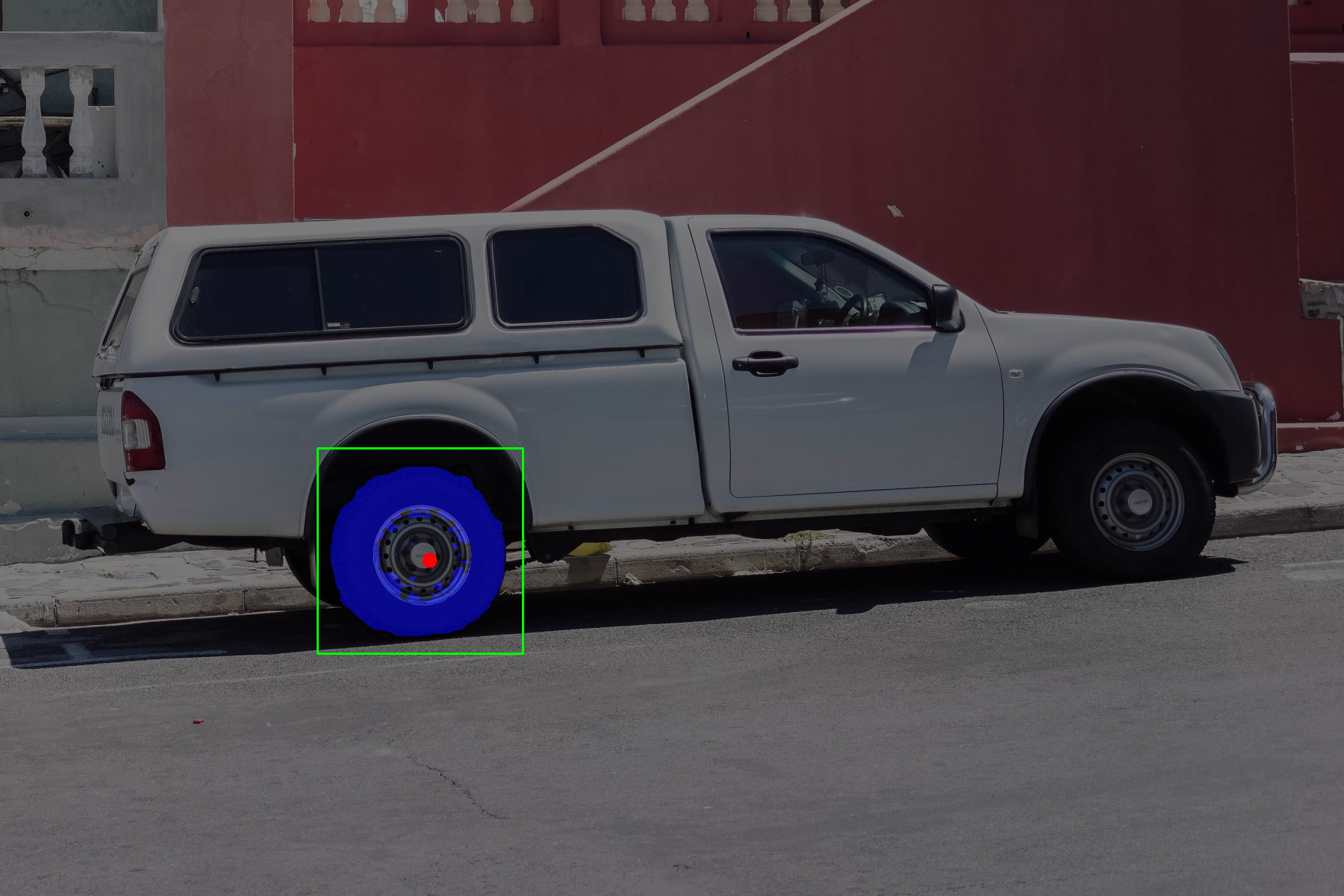

python -m samexporter.inference \

--encoder_model output_models/sam_vit_h_4b8939.encoder.onnx \

--decoder_model output_models/sam_vit_h_4b8939.decoder.onnx \

--image images/truck.jpg \

--prompt images/truck_prompt.json \

--output output_images/truck.png \

--showpython -m samexporter.inference \

--encoder_model output_models/sam_vit_h_4b8939.encoder.onnx \

--decoder_model output_models/sam_vit_h_4b8939.decoder.onnx \

--image images/plants.png \

--prompt images/plants_prompt1.json \

--output output_images/plants_01.png \

--showpython -m samexporter.inference \

--encoder_model output_models/sam_vit_h_4b8939.encoder.onnx \

--decoder_model output_models/sam_vit_h_4b8939.decoder.onnx \

--image images/plants.png \

--prompt images/plants_prompt2.json \

--output output_images/plants_02.png \

--showShort options:

- Convert all Segment Anything models to ONNX format:

bash convert_all_meta_sam.sh- Convert MobileSAM to ONNX format:

bash convert_mobile_sam.sh- Download Segment Anything 2 from https://github.com/facebookresearch/segment-anything-2.git. You can do it by:

cd original_models

bash download_sam2.shThe models will be downloaded to the original_models folder:

original_models

+ sam2_hiera_tiny.pt

+ sam2_hiera_small.pt

+ sam2_hiera_base_plus.pt

+ sam2_hiera_large.pt

...

- Install dependencies:

pip install git+https://github.com/facebookresearch/segment-anything-2.git- Convert all Segment Anything models to ONNX format:

bash convert_all_meta_sam2.sh- Inference using the exported ONNX model (only image input is supported for now):

python -m samexporter.inference \

--encoder_model output_models/sam2_hiera_tiny.encoder.onnx \

--decoder_model output_models/sam2_hiera_tiny.decoder.onnx \

--image images/plants.png \

--prompt images/truck_prompt_2.json \

--output output_images/plants_prompt_2_sam2.png \

--sam_variant sam2 \

--show- Use "quantized" models for faster inference and smaller model size. However, the accuracy may be lower than the original models.

- SAM-B is the most lightweight model, but it has the lowest accuracy. SAM-H is the most accurate model, but it has the largest model size. SAM-M is a good trade-off between accuracy and model size.

This package was originally developed for auto labeling feature in AnyLabeling project. However, you can use it for other purposes.

This project is licensed under the MIT License - see the LICENSE file for details.

- ONNX-SAM2-Segment-Anything: https://github.com/ibaiGorordo/ONNX-SAM2-Segment-Anything.