ORB-SLAM2-E is a tuned version of ORB-SLAM2 adapted to work in deformable environments by approximating the rigid model. The project is the result of a Bachelor's Degree Final Thesis in Mechanical Engineering at Universidad de Zaragoza. Its main goal is to relocalize the camera in deformable environments, for which finite element models have been embedded in the non-linear optimization processes.

Author: [Íñigo Cirauqui Viloria] Director: J. M. M. Montiel

[TFG] Íñigo Cirauqui. Deformable Loop Closure Detection in Medical Endoscope Sequences (CS: Detección de Cierre de Bucles Deformables en Secuencias de Endoscopia Médica). Trabajo de Fin de Grado, Escuela de Ingeniería y Arquitectura. Universidad de Zaragoza, 2021.

Authors: Raul Mur-Artal, Juan D. Tardos, J. M. M. Montiel and Dorian Galvez-Lopez (DBoW2)

ORB-SLAM2 is a real-time SLAM library for Monocular, Stereo and RGB-D cameras that computes the camera trajectory and a sparse 3D reconstruction (in the stereo and RGB-D case with true scale). It is able to detect loops and relocalize the camera in real time. We provide examples to run the SLAM system in the KITTI dataset as stereo or monocular, in the TUM dataset as RGB-D or monocular, and in the EuRoC dataset as stereo or monocular. We also provide a ROS node to process live monocular, stereo or RGB-D streams. The library can be compiled without ROS. ORB-SLAM2 provides a GUI to change between a SLAM Mode and Localization Mode, see section 9 of this document.

[Monocular] Raúl Mur-Artal, J. M. M. Montiel and Juan D. Tardós. ORB-SLAM: A Versatile and Accurate Monocular SLAM System. IEEE Transactions on Robotics, vol. 31, no. 5, pp. 1147-1163, 2015. (2015 IEEE Transactions on Robotics Best Paper Award). PDF.

[Stereo and RGB-D] Raúl Mur-Artal and Juan D. Tardós. ORB-SLAM2: an Open-Source SLAM System for Monocular, Stereo and RGB-D Cameras. IEEE Transactions on Robotics, vol. 33, no. 5, pp. 1255-1262, 2017. PDF.

[DBoW2 Place Recognizer] Dorian Gálvez-López and Juan D. Tardós. Bags of Binary Words for Fast Place Recognition in Image Sequences. IEEE Transactions on Robotics, vol. 28, no. 5, pp. 1188-1197, 2012. PDF

ORB-SLAM2/ORB-SLAM2-E are released under a GPLv3 license. For a list of all code/library dependencies (and associated licenses), please see Dependencies.md.

For a closed-source version of ORB-SLAM2 for commercial purposes, please contact the authors: orbslam (at) unizar (dot) es. For a closed-source version of ORB-SLAM2-E for commercial purposes, please contact the authors: icirauqui (at) gmail (dot) es.

We have tested the library on Ubuntu 16.04 and 20.04, but it should be easy to compile on other platforms. A powerful computer (e.g. i7) will ensure real-time performance and provide more stable and accurate results.

We use the new thread and chrono functionalities of C++11.

We use Pangolin for visualization and user interface. Download and install instructions can be found at: https://github.com/stevenlovegrove/Pangolin.

We use OpenCV to manipulate images and features. Download and install instructions can be found at: http://opencv.org. Version 2.4.3 or newer is required. Tested with OpenCV 2.4.11 and OpenCV 3.2.

Eigen3 is required by g2o (see below). Download and install instructions can be found at: http://eigen.tuxfamily.org. Version 3.1.0 or newer is required.

We use modified versions of the DBoW2 library to perform place recognition and of the g2o library to perform non-linear optimizations. Both modified libraries (which are BSD-licensed) are included in the Thirdparty folder.

We provide some examples to process the live input of a monocular, stereo or RGB-D camera using ROS. Building these examples is optional. If you want to use ROS, version Hydro or newer is needed.

PCL (Point Cloud Library) is required for the finite element modelling. Download and install instructions can be found at: https://pointclouds.org/.
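As a quick sanity check before building, the minimum versions stated above (OpenCV 2.4.3, Eigen 3.1.0) can be compared against what is installed. The following is only a sketch: the helper names `version_ge` and `report` are made up for this example, it assumes the libraries were installed with pkg-config metadata (module names `opencv`, `opencv4` and `eigen3`), and it relies on GNU `sort -V`. Libraries installed without a `.pc` file will simply be reported as not found.

```shell
#!/usr/bin/env bash
# Minimum versions taken from the prerequisites listed above.
MIN_OPENCV="2.4.3"
MIN_EIGEN="3.1.0"

# version_ge A B: succeeds when version A >= version B.
# Uses GNU `sort -V`, so 2.4.11 is correctly treated as newer than 2.4.3.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# report NAME MIN MODULE...: ask pkg-config for the first module that exists
# and print whether its version satisfies MIN. Always returns 0.
report() {
    local name="$1" min="$2" pkg found=""
    shift 2
    for pkg in "$@"; do
        found="$(pkg-config --modversion "$pkg" 2>/dev/null)" && break
        found=""
    done
    if [ -z "$found" ]; then
        echo "$name: not found via pkg-config (it may still be installed elsewhere)"
    elif version_ge "$found" "$min"; then
        echo "$name $found: OK (>= $min)"
    else
        echo "$name $found: too old (need >= $min)"
    fi
}

# `opencv4` is queried only to detect newer installs; the text above lists
# 2.4.11 and 3.2 as the tested OpenCV versions.
report "OpenCV" "$MIN_OPENCV" opencv4 opencv
report "Eigen3" "$MIN_EIGEN" eigen3
```

Pangolin and PCL are left out because the text does not state a minimum version for them.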

Clone the repository:

git clone https://github.com/icirauqui/ORB_SLAM2_E.git

We provide a script, build.sh, that deploys the software. Please make sure you have installed all required dependencies (see section 2). Execute:

cd ORB_SLAM2_E

chmod +x build.sh

./build.sh 0

This will create libORB_SLAM2_E.so in the lib folder and the executables mono_tum, mono_kitti, rgbd_tum, stereo_kitti, mono_euroc and stereo_euroc in the Examples folder.
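To confirm that the build produced the files listed above, something like the following can be used. It is a minimal sketch: `check_build` is a hypothetical helper, not part of the repository, and because the exact subfolders inside Examples are not stated here, the executables are searched for recursively.

```shell
#!/usr/bin/env bash
# check_build ROOT: report which of the expected build outputs exist under ROOT.
# Succeeds only when the library and all six executables are present.
check_build() {
    local root="${1:-.}" missing=0 exe

    # Library location as stated in the text: lib/libORB_SLAM2_E.so
    if [ -f "$root/lib/libORB_SLAM2_E.so" ]; then
        echo "ok       lib/libORB_SLAM2_E.so"
    else
        echo "MISSING  lib/libORB_SLAM2_E.so"
        missing=$((missing + 1))
    fi

    # Executables are looked up anywhere below Examples (assumption: the
    # subfolder layout is not specified, so a recursive search is used).
    for exe in mono_tum mono_kitti rgbd_tum stereo_kitti mono_euroc stereo_euroc; do
        if [ -n "$(find "$root/Examples" -type f -name "$exe" 2>/dev/null | head -n 1)" ]; then
            echo "ok       $exe"
        else
            echo "MISSING  $exe"
            missing=$((missing + 1))
        fi
    done

    [ "$missing" -eq 0 ]
}
```

After sourcing the snippet, run `check_build .` from the repository root; a non-zero exit status means at least one output is missing.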

For a monocular input from topic /camera/image_raw run node ORB_SLAM2_E/MonoE. You will need to provide the vocabulary file and a settings file.

rosrun ORB_SLAM2_E MonoE PATH_TO_VOCABULARY PATH_TO_SETTINGS_FILE

With the main node running, you have to feed it a rosbag file with the images. Alternatively, you can use a live feed from a USB camera, for which the ROS usb_cam package must be installed.

rosbag play bag_name.bag

You will need to create a settings file with the calibration of your camera. See the settings files provided as examples. We use the calibration model of OpenCV. See the examples to learn how to create a program that makes use of the ORB-SLAM2-E library and how to pass images to the SLAM system.
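As a starting point, a settings file can be generated from a template like the one below. This is a sketch under assumptions: the key names follow the example settings files shipped with upstream ORB-SLAM2, and ORB-SLAM2-E may expect additional keys (for instance for the finite element model) that are not shown. The helper `write_settings` is hypothetical, and all camera values are placeholders that must be replaced with your own calibration.

```shell
#!/usr/bin/env bash
# write_settings FILE: write a template monocular settings file to FILE.
# Key names are assumed to match the upstream ORB-SLAM2 example files;
# every camera value below is a placeholder, not a real calibration.
write_settings() {
    cat > "$1" <<'EOF'
%YAML:1.0

# Camera intrinsics in pixels (OpenCV pinhole model)
Camera.fx: 500.0
Camera.fy: 500.0
Camera.cx: 320.0
Camera.cy: 240.0

# OpenCV distortion coefficients (radial k1, k2, k3; tangential p1, p2)
Camera.k1: 0.0
Camera.k2: 0.0
Camera.p1: 0.0
Camera.p2: 0.0
Camera.k3: 0.0

# Frames per second, and colour order (0: BGR, 1: RGB)
Camera.fps: 30.0
Camera.RGB: 1

# ORB extractor parameters
ORBextractor.nFeatures: 1000
ORBextractor.scaleFactor: 1.2
ORBextractor.nLevels: 8
ORBextractor.iniThFAST: 20
ORBextractor.minThFAST: 7

# Map viewer parameters
Viewer.KeyFrameSize: 0.05
Viewer.KeyFrameLineWidth: 1
Viewer.GraphLineWidth: 0.9
Viewer.PointSize: 2
Viewer.CameraSize: 0.08
Viewer.CameraLineWidth: 3
Viewer.ViewpointX: 0
Viewer.ViewpointY: -0.7
Viewer.ViewpointZ: -1.8
Viewer.ViewpointF: 500
EOF
}
```

For example, `write_settings my_camera.yaml` creates the template, which can then be edited and passed as PATH_TO_SETTINGS_FILE. Comparing it against the settings files provided in the repository is the safest way to find any keys this template lacks.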

You can change between the SLAM and Localization mode using the GUI of the map viewer.

This is the default mode. The system runs three threads in parallel: Tracking, Local Mapping and Loop Closing. The system localizes the camera, builds a new map and tries to close loops.

This mode can be used when you have a good map of your working area. In this mode the Local Mapping and Loop Closing are deactivated. The system localizes the camera in the map (which is no longer updated), using relocalization if needed.

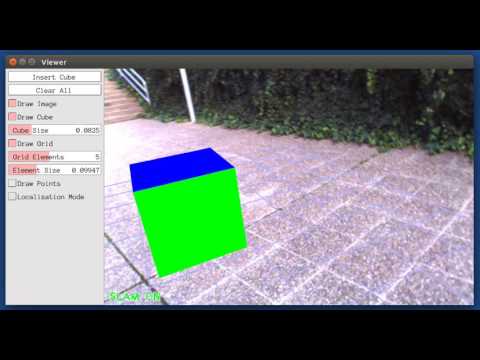

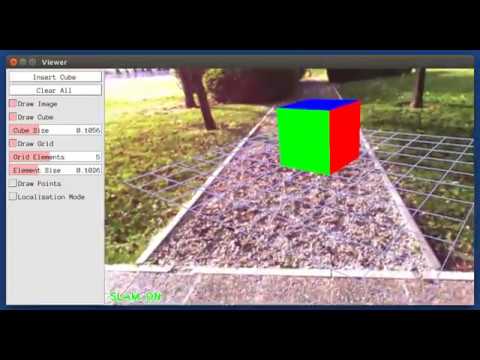

You can enable "FEA" so the Finite Element Model is represented in both the map and the image. If you enable "Debug Mode", extra information about the process and deformation data is displayed in the terminal.